When I build new products from concept to launch, I ask my teams to adopt the design thinking process. For example, when I built a product called Castlight Action, the first several weeks of the project were devoted to listening to benefits leaders from companies such as Walmart and Mondelez to understand their pain points around health benefits administration and communication. The next several weeks were devoted to forming a hypothesis, creating concept stories, rapid prototyping, and validating concepts with targeted users, followed by detailed definition, design and development. The product was a commercial success when we launched. Hundreds of customers adopted it, and it made millions in revenue every year for Castlight Health. This project had a dedicated team of 70 people with a very clear goal of creating a commercially successful product.

However, when there was no major program with an ambitious goal, the design thinking process was applied only sporadically and inconsistently. Thanks to the efforts of Vince Loomba, head of product operations, Castlight Health had a very robust quarterly planning process for development projects. Systematic, sustainable innovation, however, was particularly hard. Innovation did happen during quarterly execution, but it happened in a hidden factory, led by individual initiative, where progress was hard to track and success hard to measure. Product managers were more focused on getting executives and engineering to invest in their ideas than on identifying products and features that would bring value to customers and become a commercial success. When specific functionality was approved for investment in a quarter, designers and user researchers were given limited time and short notice to test the concept and do detailed design. Ruta Raju, Castlight’s head of design at that time, pointed out to me that innovation and design projects should be treated as separate projects with specific deliverables rather than as hurried predecessors of development projects. I agreed with his observation and promised to work on it.

I also noticed that in some companies I knew, the executive team and product managers decided to build and release major functionality with few or no customer co-innovation sessions and little or no concept testing with targeted real users. Such products were not used by customers, and months of investment were wasted.

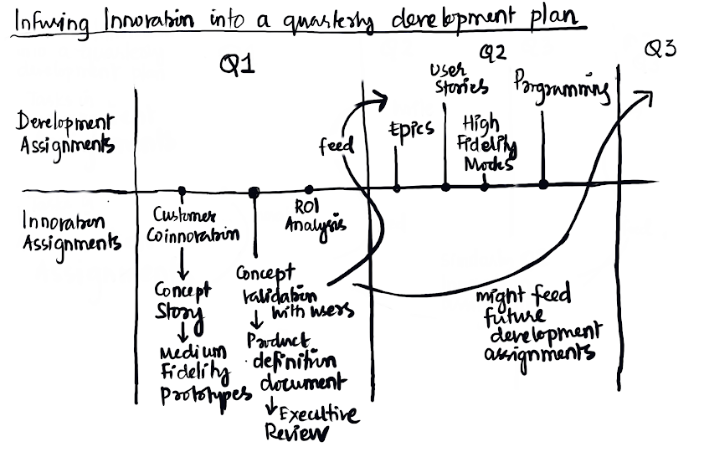

When I had an opportunity to rethink the product management process at Jemstep, I asked my colleague Jason Kim, director of product operations, to separate innovation assignments from development assignments. I suggested that, apart from the regular development assignments for a quarter, we have a set of innovation assignments with specific deliverables that would become candidates for development assignments in the subsequent quarter. I also asked product managers and designers to plan their time commitments for such assignments every quarter. Jason then created detailed, separate project plans for development assignments and innovation assignments. I identified the skills needed and the tools we needed to invest in to support this process. Product managers were trained on specific skills and supported when necessary.

A. Quarterly Innovation Assignments

For innovation assignments, product managers and designers worked together to do the following.

Create concept stories with low fidelity mockups.

Conduct customer co-innovation sessions to validate the hypothesis.

Create medium fidelity prototypes of the proposed functionality.

Conduct concept testing with real users.

Calculate the return on investment for large innovation objectives.

Review the solution with internal teams, including executive sponsors.

For example, when we chose to invest in Individual Retirement Accounts for our robo advice product at Jemstep, Laura Lewison, the lead product manager responsible, did a return on investment analysis for the functionality and had the numbers ready for the executive review of quarterly investments. She held co-innovation sessions with our customers to validate the need. She also created medium fidelity prototypes that simulated the completed functionality and tested the concept with real users.
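The article does not say how the return on investment was computed, so the following is only a minimal sketch of one common approach: net gain divided by total cost over a fixed horizon, plus a simple payback period. The class name, field names, three-year horizon, and all figures are hypothetical illustrations, not Jemstep data.

```python
from dataclasses import dataclass


@dataclass
class InnovationCandidate:
    """Hypothetical estimates for one innovation objective (illustrative only)."""
    name: str
    build_cost: float        # estimated one-time development cost
    annual_run_cost: float   # estimated yearly support and hosting cost
    annual_revenue: float    # estimated yearly incremental revenue
    horizon_years: int = 3   # evaluation window; an assumption, not from the article


def simple_roi(c: InnovationCandidate) -> float:
    """Return (total gain - total cost) / total cost over the horizon."""
    total_cost = c.build_cost + c.annual_run_cost * c.horizon_years
    total_gain = c.annual_revenue * c.horizon_years
    return (total_gain - total_cost) / total_cost


def payback_years(c: InnovationCandidate) -> float:
    """Years until cumulative net revenue covers the build cost."""
    net_per_year = c.annual_revenue - c.annual_run_cost
    if net_per_year <= 0:
        return float("inf")  # never pays back under these estimates
    return c.build_cost / net_per_year


# Made-up numbers purely to show the arithmetic.
candidate = InnovationCandidate(
    name="Example feature",
    build_cost=400_000,
    annual_run_cost=50_000,
    annual_revenue=300_000,
)
print(f"{candidate.name}: ROI {simple_roi(candidate):.0%}, "
      f"payback {payback_years(candidate):.1f} years")
```

A calculation like this is only as good as its revenue and cost estimates, which is one reason to pair it with co-innovation sessions and concept tests before the executive review.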

Such innovation projects were given a full calendar quarter. They were treated like any other quarterly objective. The key difference was that the deliverables were user-validated concepts with fully developed medium fidelity prototypes. These deliverables and insights were then used to determine if and when we should invest in developing such functionality.

Every piece of functionality we developed was put through a concept validation test with carefully chosen end users. For example, to validate a concept we could choose users in their forties, living in Texas, who have both an individual retirement account and a bank account. All of this can be set up in a matter of minutes, and insights can be derived in a matter of hours. We used UserTesting.com to recruit users and conduct such validation. When possible, product managers also met customers and validated the concepts with them.

B. Quarterly Development Assignments

For development projects, product managers created product definition documents, epics, user stories, acceptance criteria, and test cases. Designers created high fidelity mockups. Engineers programmed the functionality and demoed their progress every week.

Skills required for such an approach

Because every product and capability is required to go through this framework, product managers and designers have to learn specific skills: customer co-innovation, creating a concept story with low fidelity mockups, creating a medium fidelity prototype that explains the user journey, conducting moderated and unmoderated concept validations using a service such as UserTesting.com, and writing a product definition document.

Challenges a product leader will face

A product leader taking this approach will face the following challenges.

First, you may not get executive support for such an investment. To get that support, convey that this approach leads to the development of high quality products in a sustainable manner while significantly increasing the chances of success. Unfortunately, not every senior executive understands the importance of design thinking and innovation, and there may be good business reasons for their position. My recommendation is to adapt the process to suit the company or find another company to work for. In today's hypercompetitive world, companies that take this approach will most likely beat companies that do not.

Second, a product leader may face resistance from product managers who may not see some of these tasks as their responsibilities. Product designers may see this as product managers encroaching upon their area of expertise. These are genuine concerns and challenges that will have to be addressed. In my experience, particularly at Jemstep, my colleagues found a way to make this happen. There may be exceptions, which the product leader will have to manage.

Why was this not done before?

The above framework sounds logical and simple enough. So why is it not implemented in more companies? I believe that design thinking, concept story creation and user validation were considered very specialized and expensive activities. At SAP, for example, such research was conducted in separate buildings where users were recruited and paid to participate in person. Testing one feature used to cost tens of thousands of dollars. That has changed significantly with the advent of online services such as UserTesting.com. Prototyping tools have also evolved significantly in the past few years. Today, a skilled product manager and designer can simulate a final product quite quickly. Product managers and the design team at Jemstep, led by David Ma, built medium fidelity prototypes for every feature and validated most features with real users before the features were presented as candidates for development. I know from experience that this is achievable.

Taking this approach requires hard work, skill and commitment. However, in my experience it is a reliable way to build commercially successful products that customers value. If there is enough interest, I may expand on this topic in the future.